Of Cattle and Pets

And Bessie the friendly cow, and that ratty cat you never really liked.

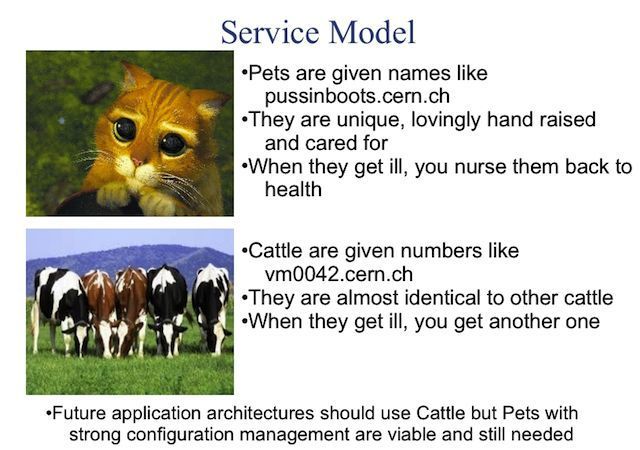

I have been thinking a lot about cattle and pets lately (in the IT sense). If you are not aware of the concept, it's something that Randy Bias (from EMC/Cloudscaling) coined and the admins at CERN made popular. It basically says, you can treat your servers like pets or like cattle.

It's a great simple way to think about how you run your servers. The point is that managing cattle is nice. It makes services simple to manage, develop, and scale and it's easier to make them fault-tolerant.

So, what's new?

I think that the cattle/pet separation deserves some more fleshing out, so, I drew this image (quite IT consultant-like right? :))

First of all, being a pet or cattle isn't black or white, it's a spectrum. On the extreme pet side you have a manually set up a server (or 10) that you haven't backed up, and can't afford to lose. On the extreme cattle side you have automation that simply logs and trends failed servers, and recovery is automatic, invisible to the end-users, and something mere humans shouldn't meddle with.

Secondly, I broadened the scope a bit. Thinking about services is more useful than single servers. Your service is (or should be!) made up of several servers. The question is how to manage them collectively like cattle. There will be variance between servers that make up your service.

Finally, it's not only about virtual machines. The pet/cattle spectrum applies if you run your service on VMs, physical servers, in docker containers, etc. For simplicity, I'll call all of these "servers" collectively.

To simplify, I'd define a service's cattleness as an inverse of the amount of annoyance you suffer when a server goes down.

But what's required to make your servers move towards being cattle? The two main things are

- Service architecture - How do you avoid single points of failure and make servers replaceable?

- Automation - When servers go down, can you easily get them up again?

The cattle drivers (pun intended) and requirements

I've tried to break down the most important factors determining how far along the scale you can/should/are forced to move.

Operational maturity

(Because consultant-like images need consultant-like terms).

This was the best term I could think of for how to run operations. Service architecture design is an important part of this. How do you avoid single points of failure in your service? Do you know where your service keeps all its state? Do you have monitoring, alerting, and trending set up? When taking new components into use, do you consider how they will affect the maintainability of the service? The higher up the cattle scale you climb, the better you need to be at this.

IT skills

This might be obvious, but you need to have quite a good team of IT people for this. For operations people coding skills are necessary. There are a ton of tools you should be able to handle. Some common ones are Ansible, git and Jenkins. You also need to understand the nuts and bolts of the system to run it well. The more cattle-like you go, the more skills you (or your team) need to handle.

Investment (as if effort)

You have to put in effort to move up the scale. The main idea is that you invest effort now to reduce work later. This will be a limiting factor how far along the scale it's reasonable to move. There's no point in putting a lot of effort in automating things that will never pay back the time spent automating it.

Scale

When your scale grows, you have to move towards cattle. One can't manage large systems as pets. The bigger you are, further you have to go. To be really far towards the cattle end of things, your service probably has to be decently large, due to the required investment into the service.

Other things that matter

We're missing some obvious things that affect how easy it's to move towards cattle. However, these are often quite static once chosen, and hence weren't taken up with the other things.

The first is of course the software that the service is made up of. Is it well designed? Does it keep state separate in a sensible way? Are the stateless components scalable and interchangeable? Modern good software reduces the effort to move towards cattle.

The second thing I mentioned in the beginning. It's the platform you're running on. It's much easier to run cattle on an IaaS cloud than on bare metal or traditional VMs, and a good PaaS layer makes it even easier.

But, what should I aim for? Bessie?

The first thing is to have a clue where you are on the scale. For each server type you have, what happens if it goes down? How does it affect your service and how long doest it take to recover. Can you improve on this? There are probably some easy things to fix, like automating the configuration of the servers if you don't do it already. Think reducing your annoyance if a server goes down.

I think that a good rule of thumb is try to move towards cattle for as long as it makes sense. How far you can go depends on the aforementioned factors.

For example, you're setting up a pilot for a few months? You probably don't want to go far towards cattle. The investment just won't pay itself back.

On the other hand, a two-man startup team with experience can probably go far towards cattle, even if their scale is small. They are experienced, they can choose a modern platform and they're working with modern methods. All this reduces the investment cost.

Moving towards cattle will take time and energy, but if you're in a position to think about medium and long term, it will pay itself back. Even if you move just a little. If you can affect the deployment platform or software components in the early stages, it's a great way to reduce the required effort to move up the scale.

Predictions for the future

The deployment platforms will keep growing more advanced and feature-rich. A lot of new software will also be better designed when it comes to cattleness.

This means that the effort to move towards cattle will be greatly reduced for new services. As more responsibilities move to the deployment platform, the operational maturity requirements will probably also drop. However, the IT skill requirements will become even more pronounced with new technologies.

Geek. Product Owner @CSCfi